Progress today on modeling global daily C19 deaths using a neural network. The key was to experiment with different activation functions. All previous neural net results for C19 in this blog used relu activation, but for this application tanh is far superior. Once that is decided, the issue becomes the structure of the neural network: how many hidden layers and how many neurons per hidden layer. Today we will use one hidden layer.

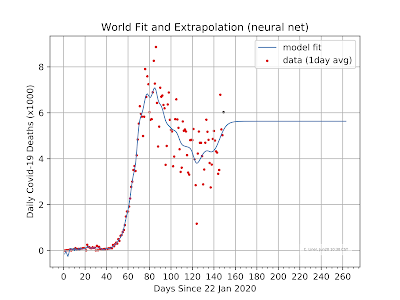

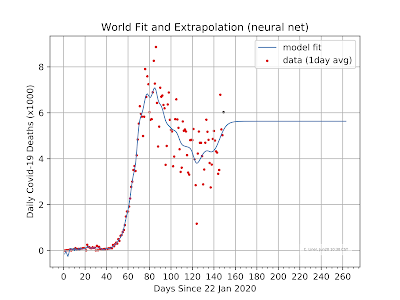

The figures below show results of fitting world daily C19 death data using an sklearn MLPRegressor neural net with one hidden layer. The caption of each figure indicates the number of fully connected neurons in the hidden layer: 50, 100, 200, or 500. With too few neurons the fit is blocky; with too many the data is overfit and the curve follows many local features. To my eye, the right tradeoff among the cases shown is 200 neurons; the fit line goes about where I would draw it by hand as a smooth curve.
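For readers who want to try the comparison themselves, here is a minimal sketch of the kind of sweep described above. It is not the code behind the figures: the data is a made-up noisy curve standing in for the real death series, and the rescaling of inputs and targets, `max_iter=500`, and `random_state=0` are my assumptions. The `score` method of `MLPRegressor` returns R² on the data passed to it.

```python
import numpy as np
from sklearn.neural_network import MLPRegressor

# Synthetic stand-in for the daily series (NOT the real data):
# a smooth rising curve plus noise.
rng = np.random.default_rng(0)
days = np.arange(150)
smooth = 6000.0 / (1.0 + np.exp(-(days - 75) / 8.0))
daily = smooth + rng.normal(0.0, 400.0, size=days.size)

# Assumption: rescale inputs and targets to roughly [0, 1].
X = (days / days.max()).reshape(-1, 1)
y = daily / daily.max()

scores = {}
for n_hidden in (50, 100, 200, 500):
    model = MLPRegressor(
        hidden_layer_sizes=(n_hidden,),  # one hidden layer
        activation="tanh",
        solver="lbfgs",
        max_iter=500,      # kept small so the sketch runs quickly
        random_state=0,    # fixed only to make the sketch repeatable
    )
    model.fit(X, y)
    scores[n_hidden] = model.score(X, y)  # R^2 on the training data

for n_hidden, r2 in scores.items():
    print(f"{n_hidden:4d} neurons: R^2 = {r2:.4f}")
```

A training-set R² only goes up as neurons are added, so it cannot pick the "right" size by itself; that is why the choice above is made by eye.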

Score = 0.9216

Neural Net: MLPRegressor(activation='tanh', alpha=0.0001, batch_size='auto', beta_1=0.9,
beta_2=0.999, early_stopping=False, epsilon=1e-08,
hidden_layer_sizes=(200,), learning_rate='constant',
learning_rate_init=0.001, max_fun=15000, max_iter=2000,
momentum=0.9, n_iter_no_change=10, nesterovs_momentum=True,
power_t=0.5, random_state=None, shuffle=True, solver='lbfgs',
tol=0.0001, validation_fraction=0.1, verbose=False,
warm_start=False)
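Most of the parameters in that printout are library defaults. As a sketch, the same estimator can be built by passing only the four values that differ from the defaults. The list of settings that the `lbfgs` solver ignores reflects my reading of the scikit-learn documentation, so treat it as a guide rather than a guarantee.

```python
from sklearn.neural_network import MLPRegressor

# Only the non-default settings from the printout are passed explicitly.
model = MLPRegressor(
    hidden_layer_sizes=(200,),
    activation="tanh",
    solver="lbfgs",
    max_iter=2000,
)
params = model.get_params()

# Settings documented as applying only to the 'sgd' or 'adam' solvers,
# so they should have no effect on an 'lbfgs' fit (my reading of the docs).
unused_with_lbfgs = [
    "batch_size", "learning_rate", "learning_rate_init", "momentum",
    "nesterovs_momentum", "power_t", "beta_1", "beta_2", "epsilon",
    "early_stopping", "validation_fraction", "n_iter_no_change", "shuffle",
]

for name in ("hidden_layer_sizes", "activation", "solver", "max_iter",
             "alpha", "tol"):
    print(f"{name} = {params[name]!r}")
```

With `random_state=None`, as in the printout, the initial weights differ from run to run, so the score and the future estimates will vary slightly between runs.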

Total World C19 deaths 459,988

Last five daily = [3508 6786 5274 5022 6024]

Future estimates

4537 = Jul 1 daily (day 161)

4539 = Aug 1 daily (day 192)

4539 = Sep 1 daily (day 223)
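The day numbers in the estimates can be tied back to calendar dates with a little date arithmetic. This sketch assumes the year is 2020 and that the day count is zero-based, neither of which is stated in the output. Under those assumptions, day 0 works out to January 22, 2020.

```python
from datetime import date, timedelta

# Assumption: the year is 2020 and day numbers count from zero.
# Anchor on the first estimate: Jul 1 is day 161.
DAY_ZERO = date(2020, 7, 1) - timedelta(days=161)

def day_index(d):
    """Number of days between DAY_ZERO and the given date."""
    return (d - DAY_ZERO).days

print("day 0 =", DAY_ZERO.isoformat())
for d in (date(2020, 7, 1), date(2020, 8, 1), date(2020, 9, 1)):
    print(d.isoformat(), "-> day", day_index(d))
```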

Note that all cases extrapolate horizontally beyond the data. I am not sure whether this is a quirk of the current data points or a general feature.
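One possible explanation for the flat extrapolation is that tanh saturates: each hidden unit approaches +1 or -1 for inputs far from the data, so a one-hidden-layer tanh network tends toward a constant. That would make it a general feature rather than a quirk of the data. The sketch below illustrates this with made-up weights, not the weights of the fitted models; the names `w`, `b`, `v`, and `c` are purely illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
n_hidden = 200

# Hypothetical weights, NOT taken from any fitted model.
# Magnitudes are kept away from zero so every unit saturates quickly.
w = rng.uniform(0.5, 2.0, n_hidden) * rng.choice([-1.0, 1.0], n_hidden)
b = rng.uniform(-1.0, 1.0, n_hidden)   # hidden-layer biases
v = rng.normal(0.0, 1.0, n_hidden)     # output-layer weights
c = 0.3                                # output bias

def net(x):
    """One-hidden-layer tanh network: f(x) = v . tanh(w*x + b) + c."""
    return float(v @ np.tanh(w * x + b) + c)

# As x -> +inf, tanh(w*x + b) -> sign(w), so f(x) -> v . sign(w) + c.
limit = float(v @ np.sign(w) + c)

for x in (1.0, 10.0, 100.0, 1000.0):
    print(f"x = {x:7.1f}  f(x) = {net(x):+.6f}")
print(f"limit as x -> +inf: {limit:+.6f}")
```

By contrast, a relu network is piecewise linear, so beyond the data it would be expected to continue along a straight line rather than level off.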

Result using 50 neurons in the hidden layer

100 neurons

200 neurons

500 neurons